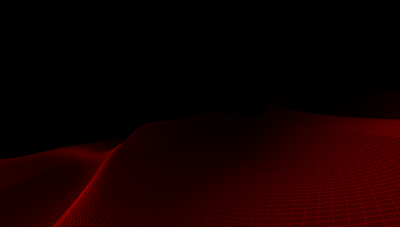

If you are paying close attention, you might have noticed that the background of this blog is a very simple and straightforward wave simulation written in GLSL and rendered with WebGL. It was written specifically with the OpenGL Shading Language, Second Edition text [1,2] in mind, so that it is compatible with every single WebGL implementation ever conceived, and it is almost completely decoupled from the browser. It has some intentional 'glitch' in it, which is a reference to the analog days of Sutherland's work.

Specifically, the simulation uses a textbook implementation of GLSL, as follows:

- GLSL 1.2

- OpenGL ES 2.0

- WebGL 1.0

The only coupling to the browser is the opening of a GL context and the mouse handling. If one clicks on the animation in the right place, an "un-project" operation unwinds the Z-division so that the fragment that underlies the mouse cursor can be calculated, and the scene can then be rotated using a very primitive rotation scheme that suffers from gimbal lock (no quaternions here!). Both are extraordinarily simple 3D graphics operations that should not affect rendering at all, and they are the absolute minimum level of coupling one might expect. In short, it is the perfect test of the most basic WebGL capability.
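To illustrate what gimbal lock means for a primitive Euler-angle rotation scheme, here is a minimal sketch. It is not the code used on this blog: the rotation order (yaw about Z, pitch about Y, roll about X) and all function names are assumptions chosen for illustration.

```typescript
type Mat3 = number[][];

// Multiply two 3x3 matrices stored as arrays of rows.
function mul(a: Mat3, b: Mat3): Mat3 {
  return a.map((row) =>
    [0, 1, 2].map((j) => row[0] * b[0][j] + row[1] * b[1][j] + row[2] * b[2][j])
  );
}

// Elementary rotations about the X, Y and Z axes (angles in radians).
function rotX(a: number): Mat3 {
  const c = Math.cos(a), s = Math.sin(a);
  return [[1, 0, 0], [0, c, -s], [0, s, c]];
}
function rotY(a: number): Mat3 {
  const c = Math.cos(a), s = Math.sin(a);
  return [[c, 0, s], [0, 1, 0], [-s, 0, c]];
}
function rotZ(a: number): Mat3 {
  const c = Math.cos(a), s = Math.sin(a);
  return [[c, -s, 0], [s, c, 0], [0, 0, 1]];
}

// Assumed convention: R = Rz(yaw) * Ry(pitch) * Rx(roll).
function eulerToMatrix(roll: number, pitch: number, yaw: number): Mat3 {
  return mul(rotZ(yaw), mul(rotY(pitch), rotX(roll)));
}

// Largest element-wise difference between two matrices.
function maxDiff(a: Mat3, b: Mat3): number {
  let d = 0;
  for (let i = 0; i < 3; i++) {
    for (let j = 0; j < 3; j++) {
      d = Math.max(d, Math.abs(a[i][j] - b[i][j]));
    }
  }
  return d;
}

// At pitch = 90 degrees, roll and yaw turn about the same axis,
// so only their difference (0.7 - 0.3 = 0.4 - 0.0) matters.
const locked1 = eulerToMatrix(0.7, Math.PI / 2, 0.3);
const locked2 = eulerToMatrix(0.4, Math.PI / 2, 0.0);
console.log("difference at 90 degrees pitch:", maxDiff(locked1, locked2));
```

The two matrices come out equal up to rounding error even though the roll and yaw inputs differ, which is the lost degree of freedom. Quaternions avoid this, at the cost of a little more code.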

The un-project operation is written with the minimal amount of code required and uses a little linear algebra trick to do it very efficiently. Feel free to inspect the code to see how it's done.
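For readers who have not met un-projection before, the sketch below shows the general idea of undoing the Z-division. It is not the trick used on this blog: it assumes a standard OpenGL-style perspective projection, takes the NDC depth as a given input, and every name in it (`Perspective`, `pixelToNdc`, `project`, `unproject`) is hypothetical.

```typescript
interface Vec3 { x: number; y: number; z: number; }

// Hypothetical camera parameters for a standard OpenGL-style perspective matrix.
interface Perspective { fovY: number; aspect: number; near: number; far: number; }

// Convert a mouse position in pixels (origin top-left) to normalized device coordinates.
function pixelToNdc(px: number, py: number, width: number, height: number): { x: number; y: number } {
  return { x: (2 * px) / width - 1, y: 1 - (2 * py) / height };
}

// Forward path: eye space -> clip space -> NDC. The divide by w happens here.
function project(p: Vec3, cam: Perspective): Vec3 {
  const f = 1 / Math.tan(cam.fovY / 2);
  const a = (cam.far + cam.near) / (cam.near - cam.far);
  const b = (2 * cam.far * cam.near) / (cam.near - cam.far);
  const w = -p.z; // clip-space w produced by a perspective matrix
  return { x: ((f / cam.aspect) * p.x) / w, y: (f * p.y) / w, z: (a * p.z + b) / w };
}

// Inverse path: recover w from the NDC depth, then undo the divide.
function unproject(ndc: Vec3, cam: Perspective): Vec3 {
  const f = 1 / Math.tan(cam.fovY / 2);
  const a = (cam.far + cam.near) / (cam.near - cam.far);
  const b = (2 * cam.far * cam.near) / (cam.near - cam.far);
  const zEye = -b / (ndc.z + a); // solve ndc.z = (a * z + b) / (-z) for z
  const w = -zEye;
  return { x: (ndc.x * w * cam.aspect) / f, y: (ndc.y * w) / f, z: zEye };
}

// Example: a click in the centre of a 1280x720 canvas at an assumed NDC depth of 0.5.
const cam: Perspective = { fovY: Math.PI / 3, aspect: 16 / 9, near: 0.1, far: 100 };
const click = pixelToNdc(640, 360, 1280, 720);
console.log(unproject({ x: click.x, y: click.y, z: 0.5 }, cam));
```

In practice the depth would come from the scene itself, for example by intersecting the pick ray with the wave surface, and a model-view inverse would follow to get back to world space.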

Current State

Update 26-Jun-23: After much trial and error, and testing on many, many devices, I have now successfully isolated three separate WebGL bugs. With the three bugs properly isolated, I'm starting the writeup and hope to submit the following bug reports in the next week or two (TBC...):

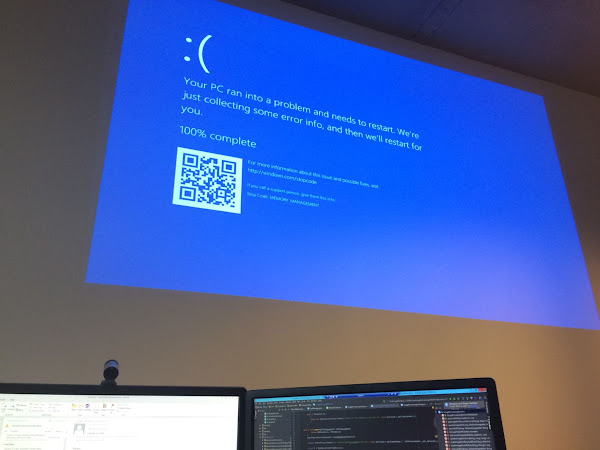

- Incorrect rendering of WebGL 1.0 scene in Google Chrome

- WebGL rendering heisenbug causes GL context to crash in Chrome after handling some N fragments

- WebGL rendering heisenbug causes GL context to incorrectly render scene after handling some N fragments

The current state as of 14-March-2023 is that Chrome and other browsers are not able to run this animation for more than 24 hours without crashing, and on the latest versions of Chrome released in early March, the animation has slowed down to ridiculously low FPS levels. Previously the animation ran at well over 30 FPS on most devices, but would crash after 24 hours.

This animation will quite happily run on an old iPad running Safari; Chrome, however, currently seems to be struggling. The number of vertices and the number of sin() operations that it needs to calculate are well within the capabilities of all modern processors, including those found in i-devices such as phones and tablets on which one can play a typical game.

Brave Browser (based on Chromium) renders correctly, but is horrendously slow in the GA branch (Beta currently works fine). I'm not sure at this stage whether this is a Linux vs. Windows issue or a discrete vs. embedded GPU issue; I will investigate further when I have time.

NB. As of 14-March-2023, on Brave Browser 1.49.120 on Windows with a discrete GPU the simulation struggles to render 5 FPS, while on Brave Browser 1.50.85 (beta) on Linux with an embedded GPU it works OK. However, I can point to other visualisation artefacts elsewhere that 1.50.85 cannot handle (but which previous versions of Brave/Chrome could). For example, on the homepage of vizdynamics.com, the Humanized Data Robot should gently move around the screen with a slight parallax effect when one moves the mouse over it. Why is the rendering engine in Chrome suddenly regressing, and why is it not using the GPU? This wave simulation should be able to run in its entirety on a SIMD architecture, and the Humanized Data Robot used to render flawlessly. What is going on?

At 30 FPS, the wave simulation requires around 75 MFLOPS of processing power. To put that into perspective, the first Sun Microsystems SPARCstation, released in 1989, was able to calculate 16.2 MIPS (loosely comparable to MFLOPS), and the SPARCstation 2 (released 1991) could calculate nearly 30 MIPS. That was over 30 years ago, and a SPARCstation 2 had enough compute power that it could happily calculate the same wave simulation at around 10-15 FPS without visualising it, or at 2-5 FPS once one implements a GPU pipeline (thank god SGI bought out IRIS GL).

I still have my copy of the original OpenGL Programming Guide (1st edition, 6th printing) that came with my Silicon Graphics Indy workstation. It was a curious book, and I implemented my first OpenGL version of these wave simulations in 1996 according to it, so I'm quite familiar with what to expect. An Indy could handle this quite well with a bit of careful tuning. What makes this visualisation hard is the tremendous number of sin() operations, and the cos() operations used to take their derivative, so it really does test the compute power of a graphics pipeline quite well: if the machine or the implementation isn't up to it, these calculations will bring it to its knees quite quickly.
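To make the sin()/cos() cost concrete, here is a minimal CPU-side sketch of a sum-of-sines surface with its analytic slope. It is an illustration under assumptions, not the shader running on this blog: the `Wave` parameters, the number of components, and the function names are all made up.

```typescript
// Hypothetical wave component: amplitude, wave vector (kx, kz), angular frequency, phase.
interface Wave { amp: number; kx: number; kz: number; omega: number; phase: number; }

// Made-up parameters, chosen only so the example has something to sum.
const waves: Wave[] = [
  { amp: 0.5, kx: 1.0, kz: 0.0, omega: 1.2, phase: 0.0 },
  { amp: 0.25, kx: 0.7, kz: 1.3, omega: 2.1, phase: 1.0 },
  { amp: 0.1, kx: -2.0, kz: 0.9, omega: 3.4, phase: 2.5 },
];

// Surface height at (x, z) and time t: one sin() per wave component per vertex.
function waveHeight(x: number, z: number, t: number): number {
  let h = 0;
  for (const w of waves) {
    h += w.amp * Math.sin(w.kx * x + w.kz * z - w.omega * t + w.phase);
  }
  return h;
}

// Analytic slope: differentiating sin() gives cos(), scaled by the wave vector.
function waveGradient(x: number, z: number, t: number): { dx: number; dz: number } {
  let dx = 0;
  let dz = 0;
  for (const w of waves) {
    const c = w.amp * Math.cos(w.kx * x + w.kz * z - w.omega * t + w.phase);
    dx += w.kx * c;
    dz += w.kz * c;
  }
  return { dx, dz };
}

// Unit surface normal for y = h(x, z): normalize (-dh/dx, 1, -dh/dz).
function waveNormal(x: number, z: number, t: number): [number, number, number] {
  const g = waveGradient(x, z, t);
  const len = Math.sqrt(g.dx * g.dx + 1 + g.dz * g.dz);
  return [-g.dx / len, 1 / len, -g.dz / len];
}

console.log(waveHeight(0.3, -1.2, 2.0), waveNormal(0.3, -1.2, 2.0));
```

Every vertex pays for one sin() and one cos() per wave component on every frame, which is why the trigonometric throughput of the pipeline dominates the cost.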

Fast-forward to 2023, and a basic i7 cannot run the simulation at 30 FPS! To put things into perspective, a 2010-era Intel i7 980 XE is capable of over 100 GFLOPS (more than 1000x the processing power required to do 75 MFLOPS), and that's without engaging any discrete or integrated SIMD GPU cores. Simply put, the animation in the background of this blog should be trivial for any computing device available today, and should run without interruption.
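The arithmetic behind that comparison is short enough to write out. The sketch below uses only the figures quoted in the text (75 MFLOPS at 30 FPS, roughly 100 GFLOPS for the CPU); the variable names are illustrative, and the last figure assumes the workload scales linearly, which ignores memory bandwidth and driver overhead.

```typescript
const requiredFlops = 75e6; // ~75 MFLOPS needed at 30 FPS (figure from the text)
const targetFps = 30;
const cpuFlops = 100e9; // ~100 GFLOPS quoted for a 2010-era i7

// Floating-point operations needed to produce one frame.
const flopPerFrame = requiredFlops / targetFps;

// How many times over the CPU alone covers the requirement.
const headroom = cpuFlops / requiredFlops;

// Idealised frame rate if the work scaled linearly with available FLOPS.
const idealFps = cpuFlops / flopPerFrame;

console.log(flopPerFrame, headroom, idealFps);
```

That works out to 2.5 million floating-point operations per frame and a headroom factor of about 1333, consistent with the "more than 1000x" figure above.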

Let's see how well things progress through March and if things improve.

Update 30-Jan-24: Adding an FFT to make the wave simulation faster makes the problem go away, or potentially causes it to take longer to crash. Dunno.

Have asked the Brave team to look into it:

Hey @brave @BraveSupport @BrendanEich While I have your attention:

I have isolated the long-standing bug with long running WebGL memory leaking till the browser crashes in Chromium on some GPUs. #SIGGRAPH @siggraph

More info here - please submit to the Chromium team, I…

— AP on CompSciFutures (∀/acc) (@CompSciFutures) January 29, 2024

References

[1] Rost, Randi J., and John M. Kessenich. OpenGL Shading Language. 2nd ed. Addison-Wesley, 2006. (Covers OpenGL 2.0 and GLSL 1.10.)

[2] Shreiner, Dave. OpenGL Programming Guide: The Official Guide to Learning OpenGL, Version 2. 5th ed. Upper Saddle River, NJ: Addison-Wesley, 2006.