Oh dear, the game is up. Our big secret is out. We should have a parade.

The Future of Modernity

This year is looking like the one when computer scientists come out and confess that the world

is undergoing a huge technology-driven revolution based on simple probabilities. Or perhaps

it's just that people have started to notice the rather obvious impact it is making on their

lives (the hype around the recent DARPA Robotics Challenge and Christine Lagarde's entertaining lecture last month are both marvelous examples of that).

This change is to computer science what quantum mechanics was to physics: a grand shift in

thinking from an absolute and observable world to an uncertain and far less observable one.

We are leaving the digital age and entering the probabilistic one. The main architects of

this change are some very smart people and my favorite super heroes - Daphne Koller, Sebastian

Thrun, Richard Neapolitan, Andrew Ng and Ron Howard (no, not the Happy Days Ron – this one).

Behind this shift is a clique of innovators and ‘thought leaders’ with an amazing vision of the

future. Their vision is grand and they are slowly creating the global cultural change they need to execute it. In their

vision, freeways are close to 100% occupied, all cars travel at maximum

speed and the population growth declines to a sustainable level.

This upcoming convergence of population to sustainable levels will not come from job-stealing or killer robots,

but from increased efficiency and the better lives we will all live, id est, the

kind of productivity increase that is inversely proportional to population growth.

And then the world is saved... by computer scientists.

What is it sort of exactly-ish?

Classical computer science is based on very precise, finite and discrete things, like counting pebbles,

rocks and shells in an exact manner. This classical science consists of many useful pieces such as the von Neumann architecture,

relational databases, sets, graph theory, combinatorics, determinism, Greek logic, sort + merge, and

so many other well defined and expressible-in-binary things.

What is now taking hold is a whole different class of computer-ey science, grounded in probabilistic

reasoning and with some other thing called information theory thrown in on the sidelines. This kind of

science allows us to deal in the greyness of the world. Thus we can, say, assign happiness values to

whether we think those previously mentioned objects are in fact more pebbly, rocky or shelly given what

we know about the time of day and its effect on the lighting of pebble-ish, rock-ish and shell-ish

looking things. Those happiness values are expressed as probabilities.
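To make the pebble-ish business a little more concrete, here is a minimal sketch of Bayes' rule over three candidate objects. Every number in it is invented purely for illustration, and the names (`posterior`, `likelihood_glossy_at_dusk`) are hypothetical rather than taken from any real system.

```python
def posterior(prior, likelihood):
    """Apply Bayes' rule over a discrete set of hypotheses.

    prior[h]      = P(h) before looking at the evidence
    likelihood[h] = P(evidence | h)
    Returns P(h | evidence) for every hypothesis h.
    """
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


# Invented prior: what we expect to find on this beach before looking.
prior = {"pebble": 0.5, "rock": 0.3, "shell": 0.2}

# Invented likelihoods: P(object looks glossy | object type, evening light).
likelihood_glossy_at_dusk = {"pebble": 0.2, "rock": 0.1, "shell": 0.7}

beliefs = posterior(prior, likelihood_glossy_at_dusk)
most_likely = max(beliefs, key=beliefs.get)
print(beliefs, most_likely)
```

With these made-up inputs, a glossy-looking thing at dusk is most probably a shell, even though shells were the least common object to begin with.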

The convenience of this probability-based framework is its compact representation of what we know,

as well as its ability to quantify what we do not(ish).

Its subjective approach is

very unlike

the

objectivism

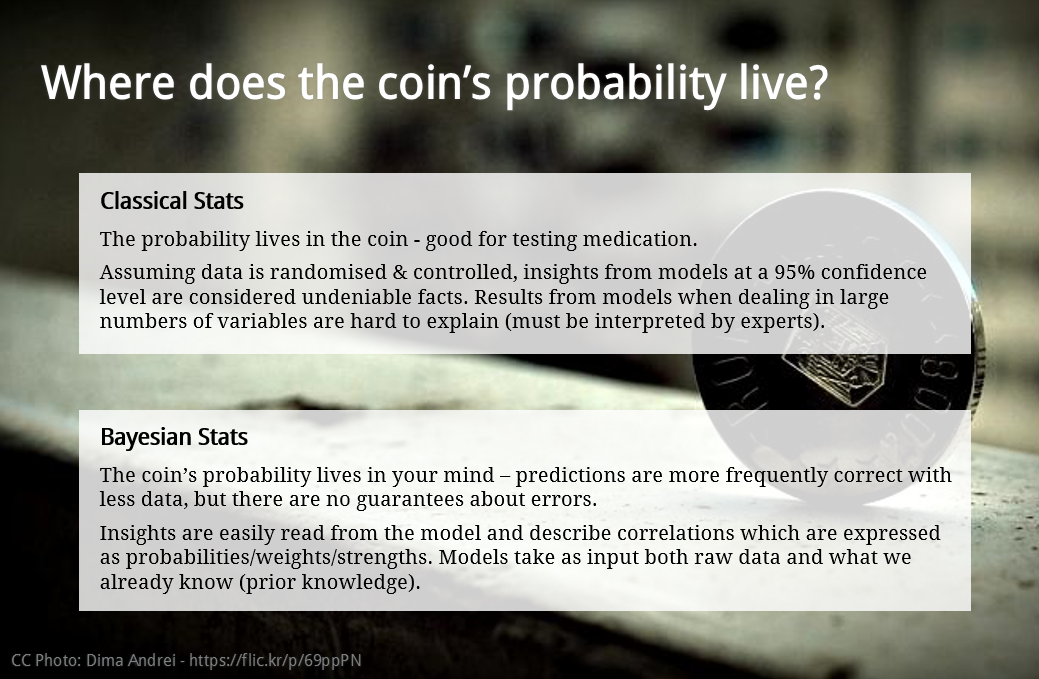

of classical stats. In classical stats,

we are trying to uncover a pre-existing independent, unbiased assessment. In the Bayesian or probabilistic

world bias is welcomed as it represents our existing knowledge, which we then update with real data.

Whoa.
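One hedged way to picture "bias, then update with real data" is the textbook Beta-Bernoulli model, where the prior is written as pseudo-counts and each observation simply adds to them. This is a sketch of that standard technique and not a claim about how any particular product works; the counts below are invented.

```python
def update_beta(alpha, beta, successes, failures):
    """Conjugate update: a Beta(alpha, beta) prior plus observed
    successes and failures gives another Beta distribution."""
    return alpha + successes, beta + failures


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# Our welcomed bias: we start out believing the coin is roughly fair.
prior = (2, 2)

# Invented data: 8 heads and 2 tails.
updated = update_beta(*prior, successes=8, failures=2)

print(beta_mean(*prior))    # belief before seeing data
print(beta_mean(*updated))  # belief after seeing data
```

The prior pulls the estimate back towards fair, so the updated belief sits between the prior's 0.5 and the raw 8-in-10 frequency.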

This paradigm shift is far more general than just the building of robots - it's

changing the world.

I shall now show you the evidence so you may update your probabilities

A testament to the power of this approach is that the market leaders in many tech verticals already have

this math at their heart. Google Search is a perfect example - only half of their rankings are

PageRank based. The rest is a big probability model that converts your search query into a machine-readable

version of your innermost thoughts and desires (to the untrained eye it looks a lot like magic).

If you don’t believe me, consider for a moment, how does Facebook choose what to display in your own feed?

How do laptops and phones interpret gestures? How do handwriting, speech and facial recognition systems work?

Error Correction?

Chatbots?

Emotion recognition?

Game AI?

PhotoSynth?

Data Compression?

It’s mostly all the same math. There are other ways, which are useful for some sub-problems, but they can

all ultimately be decomposed or factored into some sort of Bayesian or Markovian graphical probability model.

Try it yourself: Pick up your iPhone right now and ask the delightful Siri if she is probabilistic, then

assign a happiness value in your mind as to whether she is.

There, you are now a Bayesian.

APAC is missing out

Notwithstanding small pockets of knowledge, we don’t properly teach this material in Australia,

partly because it is so difficult to learn.

We are not alone here. Japan was recently struck down by this same affliction when their robots could

not help to resolve their Fukushima disaster. Their classically trained robots cannot cope

with changes to their environment that probabilities so neatly quantify.

To give you an idea of how profound this thesis is, or how far and wide it will eventually travel, it

is currently taught by the top American universities across many faculties. The only other mathematical

discipline that has found its way into every aspect of science, business and humanities is Greek logic,

and that is thousands of years old.

A neat mathematical magic trick

The Probabilistic Calculus subsumes Greek Logic, Predicate Logic, Markov Chains, Kalman Filters, Linear Models, possibly even Neural Networks, because they can all be expressed as graphical probability models. Thus logic is no longer king. Probabilities, expected utility and value of information are the new general purpose ‘Bayesian’ way to reason about anything, and can be applied in a boardroom setting

as effectively as in the lab.
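For the boardroom-minded, expected utility and value of information can be sketched in a few lines. The scenario (launch or hold a product under uncertain demand), the payoffs and the probabilities are all invented for illustration, and the function names are hypothetical.

```python
def expected_utility(action, beliefs, utility):
    """Probability-weighted payoff of one action over all states."""
    return sum(p * utility[action][state] for state, p in beliefs.items())


def best_action(beliefs, utility):
    """The action with the highest expected utility."""
    return max(utility, key=lambda a: expected_utility(a, beliefs, utility))


def value_of_perfect_information(beliefs, utility):
    """How much better we would do, on average, if we could learn the
    true state before choosing, compared with choosing now."""
    with_info = sum(
        p * max(utility[a][state] for a in utility)
        for state, p in beliefs.items()
    )
    without_info = expected_utility(best_action(beliefs, utility), beliefs, utility)
    return with_info - without_info


# Invented beliefs about demand, and invented payoffs per action and state.
beliefs = {"strong_demand": 0.4, "weak_demand": 0.6}
utility = {
    "launch": {"strong_demand": 100, "weak_demand": -50},
    "hold": {"strong_demand": 0, "weak_demand": 0},
}

choice = best_action(beliefs, utility)
vpi = value_of_perfect_information(beliefs, utility)
print(choice, vpi)
```

Under these assumptions the value of perfect information is an upper bound on what a market study is worth: pay more than that for the study and you would have been better off just deciding.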

One could build a probability model to reason about things like love; however, it's ill-advised. For

example, a well-trained model would be quite adept at answering questions like “what is the probability

of my enduring happiness given a prospective partner with particular traits and habits.”

The ethical dilemma here is that a robot built on the Bayesian Thesis is not thinking as we know it –

it's just a systematic application of an ingenious mathematical trick to create the appearance of thought. Thus for some things, it simply is not appropriate to pretend to think deeply about a topic; one must actually do it.

We need bandwidth or we will devour your 4G network whole

These probabilistic apps of the future (some of which already exist) will drive bandwidth-hogging monsters

(quite possibly literally) that could make full use of low latency fibre connections.

These apps construct real-time models of their world based on vast repositories of constantly updated knowledge stored

‘in the cloud’.

The mechanics of this requires the ability to transmit and consume live video feeds, whilst simultaneously

firing off thousands of queries against grand mid- and big-data repositories.

For example, an app might want to assign probabilities to what that shiny thing is over there, or

if it's just sensor noise, or if you should buy it, or if you should avoid crashing into it, or if

popular sentiment towards it is negative; and, oh dear, we might want to do that thousands of times

per second by querying Flickr, Facebook and Google and and and. All at once. Whilst dancing. And

wearing a Gucci augmented reality headset, connected to my Hermes product aware wallet.

This repetitive probability calculation is exactly what robots do, but in a disconnected way. Imagine

what is possible once they are all connected to the cloud. And then to each other. Then consider how much bandwidth it will require!

But, more seriously, the downside of this is that our currently sufficient 4G LTE network will

very quickly be overwhelmed by these magical new apps in a similar way to how the iPhone crushed our

3G data networks.

Given that i-Devices and robots like to move around, I don't know

whether FTTH would be worth the expense, but near-FTTH with a very high performance wireless local

loop certainly would help here. At some point we will be buying Hugo Boss branded Oculus Rift VR headsets,

and they need to plug into something a little more substantive than what we have today.

Ahh OK, what does this have to do with advertising?

In my previous post I said I would be covering advertising things. So here it is if you haven't

already worked it out: this same probability guff also works with digital advertising, and

astonishingly well.

There, I said it: the secret is out. Time for a parade.

...some useful bits coming in the next post.